Proceedings of the International Computer Music Conference

Abstract:

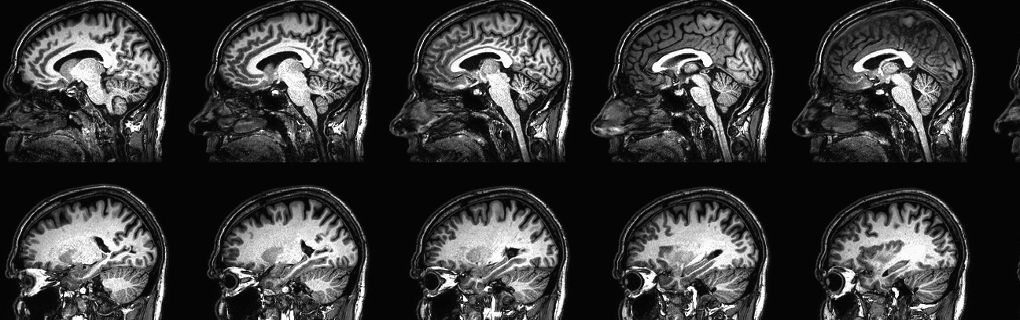

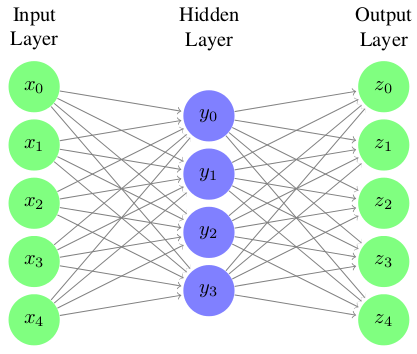

With a suitable network topology and well-tuned hyperparameters, artificial neural networks (ANNs) may be trained to learn a mapping from low-level audio features to one or more higher-level representations. Such networks are commonly used in classification and regression settings to perform arbitrary tasks. In this work we suggest repurposing auto-encoding neural networks as musical audio synthesizers. We offer an interactive system that uses feed-forward artificial neural networks for musical audio synthesis rather than for discriminative or regression tasks. In our system an ANN is trained on frames of low-level features, and a high-level representation of the musical audio is learned through an auto-encoding neural net. Our synthesis system allows one to interact directly with the parameters of the model and generate musical audio in real time. This work therefore proposes the exploitation of neural networks for creative musical applications.
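The idea of training an autoencoder on feature frames and then driving its decoder by hand can be illustrated with a minimal sketch. Everything below is an assumption made for illustration and is not taken from the paper: the frames are synthetic stand-ins for magnitude spectra, the network has a single sigmoid hidden layer, the "parameters" a performer controls are taken to be the hidden-layer activations, and the names `make_frames`, `AutoEncoder`, `train`, and `synthesize` are hypothetical. Converting the decoded frames back to audio (for example by phase reconstruction and an inverse transform) is omitted.

```python
import numpy as np

rng = np.random.default_rng(0)


def make_frames(n_frames=256, n_bins=64):
    """Synthetic stand-in for low-level audio features (assumption):
    each frame is a non-negative 'spectrum' with one Gaussian peak."""
    bins = np.arange(n_bins)
    centers = rng.uniform(8, n_bins - 8, size=(n_frames, 1))
    return np.exp(-0.5 * ((bins - centers) / 3.0) ** 2)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class AutoEncoder:
    """Feed-forward autoencoder with one hidden layer (illustrative only)."""

    def __init__(self, n_in, n_hidden):
        self.W1 = rng.normal(0.0, 0.1, (n_in, n_hidden))
        self.b1 = np.zeros(n_hidden)
        self.W2 = rng.normal(0.0, 0.1, (n_hidden, n_in))
        self.b2 = np.zeros(n_in)

    def encode(self, x):
        return sigmoid(x @ self.W1 + self.b1)

    def decode(self, h):
        return sigmoid(h @ self.W2 + self.b2)

    def train_step(self, x, lr=0.5):
        """One full-batch gradient step on squared reconstruction error."""
        h = self.encode(x)
        y = self.decode(h)
        err = y - x
        # Backpropagate through both sigmoid layers; the gradient is
        # averaged over the batch (constant factors folded into lr).
        d_out = err * y * (1.0 - y) / len(x)
        d_hid = (d_out @ self.W2.T) * h * (1.0 - h)
        self.W2 -= lr * (h.T @ d_out)
        self.b2 -= lr * d_out.sum(axis=0)
        self.W1 -= lr * (x.T @ d_hid)
        self.b1 -= lr * d_hid.sum(axis=0)
        return float(np.mean(err ** 2))


def train(model, frames, steps=200):
    """Train on all frames and return the per-step reconstruction loss."""
    return [model.train_step(frames) for _ in range(steps)]


def synthesize(model, controls):
    """Decode a feature frame from hand-set hidden activations.
    `controls` plays the role of sliders, clipped to the sigmoid range."""
    h = np.clip(np.asarray(controls, dtype=float), 0.0, 1.0)
    return model.decode(h)


frames = make_frames()
model = AutoEncoder(n_in=frames.shape[1], n_hidden=8)
losses = train(model, frames)
frame = synthesize(model, [0.9, 0.1, 0.5, 0.5, 0.2, 0.8, 0.5, 0.5])
print(f"loss: {losses[0]:.4f} -> {losses[-1]:.4f}, frame shape: {frame.shape}")
```

In this sketch the encoder is only needed during training; at performance time the decoder alone maps a handful of control values to a full feature frame, which is what makes the small hidden layer usable as a synthesis interface.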
